There is a quiet rumor rippling through the tech world: large language models belong inside enterprise software workflows the way a solo instrument belongs in a tightly conducted orchestra. The instrument may be brilliant on its own, but the orchestra does not care about solo brilliance; it demands timing, structure, and harmony with everyone else on stage.

The truth is harsher and a little more elegant: LLMs are deeply personal tools, not corporate processes.

They flourish in one-on-one dynamics: one person, one prompt, one evolving context, because that is how they are designed. Every keystroke, every revision, and every outcome aligns with a single mind and a singular goal. That is not how teams operate, and it is not how enterprises maintain velocity over years of custodianship and scale.

The Enterprise Contradiction

Call it a flaw if you must, but LLMs are crafted as intimate assistants. They adapt to your phrasing, your intent, and your iteration style. Their power feels almost unfairly acute to solo developers who can flow from thought to draft to ship in a single breath. The process is simple: think, prompt, adjust, ship. There is no formal alignment ceremony. There is no committee of stakeholders negotiating every fugitive inference or ambiguous suggestion. There is merely the moment of creation, where the model mirrors your mind.

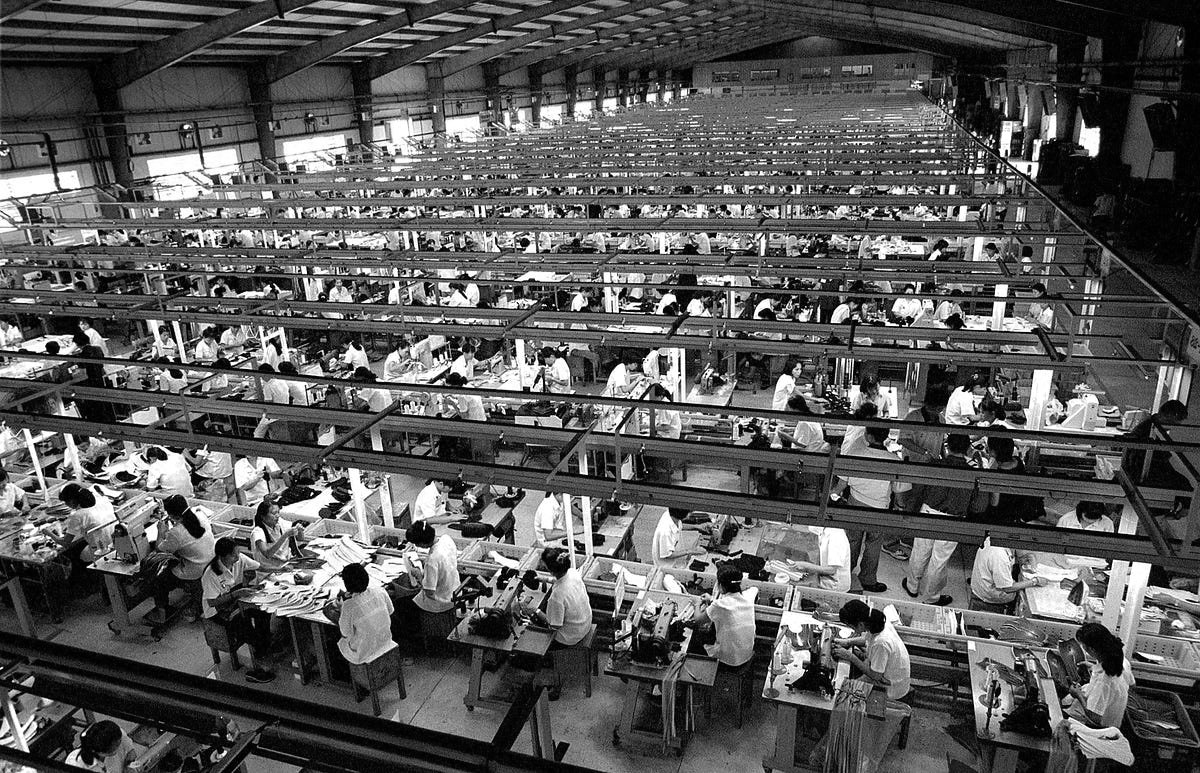

Drop the same tool into a corporate setting and those mechanics break down. Enterprises rely on shared understanding, consistency across teams, long-term maintainability, and explicit decision-making. LLMs, by contrast, thrive on implicit context, probabilistic outputs, and self-directed steering. The gap is not small. It is fundamental, a mismatch not of degree but of kind. The enterprise model depends on a stable baseline that multiple hands can align to over time, while the LLM depends on a moving target of personal context that refuses to be codified without breaking.

The Forced Ecosystem

To coax these tools into service, we layer on a support structure that grows heavier by the day: prompt guidelines, AI usage policies, context injection systems, rule files and constraints, AI-assisted reviews, and many more. All of these become a disciplined scaffold around a tool that resists discipline.

Even when teams standardize on tools like Copilot and Cursor, the outputs remain inconsistent, the styles divergent, and the context fragmented. Because each developer still works inside a private loop with the model, two engineers can solve the same problem in two entirely different, perfectly valid ways. That is not collaboration; it is parallel individualism masquerading as a system. A true team is more than a gallery of individual sparks. It is a workshop where sparks fuse into a coherent blaze. The current pattern tends to preserve the sparks without fusing them.

The Layoff "Narrative"

Across the industry you will hear a refrain about layoffs attributed to AI. In many cases, AI is not the true cause; it is the convenient narrative used to justify cost cutting. Yet a more subtle trend is taking shape. Some organizations are beginning to believe that if human–AI collaboration is messy, perhaps we should remove the messy part. The danger is not that the AI is failing, but that we are failing to design around it. When performance is measured by speed, output, and surface-level productivity, the human can appear slow and unreliable. The reflex is to prune the human from the loop rather than to rework the system for coherence and clarity.

This shift began when we started naming these systems agents. The term feels powerful and marketable. It also quietly moves the problem from collaboration to autonomy. An agent suggests independence, decision-making, and reduced human intervention. That is the opposite of what enterprise ecosystems need. The struggle we already have is a lack of shared alignment. So what happens when we give the system more freedom to act beyond a well-understood framework? We get more autonomy, and we amplify the misalignment rather than solving it.

Have We Seen This Before?

Software development itself began as an act of solitary concentration. One developer, one machine, one mental model. It was never supposed to be magically collaborative. Yet over time we layered on structures that made collaboration possible: version control, code reviews, style guides, architectural patterns, team conventions. These did not erase individuality; they contained it. They created just enough shared structure to let many individuals build one system. The trick was not to erase the solo act but to harness it within a disciplined ecosystem.

Now we are not designing systems around AI. We are injecting AI straight into systems built for human work. And with the agent concept, we are granting it even more autonomy instead of building the right scaffolding. The consequence is not innovation; it is drift.

What Should We Do?

Do what any good software engineer would: do not force alignment at the output level alone. Design alignment at the system level. Define strict boundaries between what is human and what is not. Create shared constraints that shape outputs before they are produced. Limit autonomy rather than expanding it. Treat AI as a controlled instrument, not an independent actor. Do not make AI a teammate or an agent. Instead, make it a constrained tool inside a well-defined system. It is not about coaxing the tool to act like a good colleague; it is about building a system where it can contribute without destabilizing the whole.
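One way to picture "a constrained tool inside a well-defined system" is the rough sketch below. It is not a real framework: `SharedConstraints`, `Proposal`, `gate`, and the action names are all hypothetical, invented here only to show the shape of the idea. The team owns one set of rules that is injected before the model produces anything, and every model proposal passes through an explicit boundary between what the tool may do alone and what only a person may approve.

```python
from dataclasses import dataclass
from typing import Callable


# Hypothetical, team-owned constraints: one shared definition instead of
# per-developer prompt habits. Every name here is an illustrative assumption.
@dataclass(frozen=True)
class SharedConstraints:
    allowed_actions: frozenset[str]     # what the tool may do on its own
    human_only_actions: frozenset[str]  # what always needs a person
    style_rules: tuple[str, ...]        # injected into every prompt

    def build_prompt(self, task: str) -> str:
        # Constraints shape the output before it is produced.
        rules = "\n".join(f"- {rule}" for rule in self.style_rules)
        return f"Follow these team rules:\n{rules}\n\nTask: {task}"


@dataclass(frozen=True)
class Proposal:
    action: str   # what the model wants to do, e.g. "suggest_diff"
    payload: str  # the content of the proposal


def gate(
    proposal: Proposal,
    constraints: SharedConstraints,
    approve: Callable[[Proposal], bool],
) -> str:
    """Decide what happens to a model proposal: applied or rejected."""
    if proposal.action in constraints.allowed_actions:
        return "applied"
    if proposal.action in constraints.human_only_actions:
        # The human boundary is explicit: the tool proposes, a person decides.
        return "applied" if approve(proposal) else "rejected"
    # Anything outside the shared framework is refused by default.
    return "rejected"


constraints = SharedConstraints(
    allowed_actions=frozenset({"suggest_diff"}),
    human_only_actions=frozenset({"merge", "deploy"}),
    style_rules=("Use the shared logging wrapper", "No new dependencies"),
)
```

The design choice that matters is the default: an action the team never named is rejected rather than left to the model's judgment, which limits autonomy instead of expanding it.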

LLMs did not fail enterprises. Our misinterpretation of them did. Some practices mask cost cutting as innovation, replace people instead of fixing systems, and grant artificial autonomy while ignoring the need for tighter governance. That is not a triumph of AI; it is a tutorial on how not to scale responsibly.

The spark of individual brilliance does not vanish in a team setting; it gets channeled. The same logic should apply to AI. Let us not turn the future into a collection of neat, autonomous agents. Let us build robust systems where AI is a tool, not a ruler, and collaboration means coordinated, not coerced, progress.

Opinionated Code
